Whether ELT replaces ETL depends on the use case. While businesses that work with big data are adopting ELT, ETL is still the method of choice for businesses that move data from on-premises systems to the cloud.

Globally, 97 zettabytes of data will be produced, stored, exchanged, and used in 2022, and that number is projected to rise to 181 zettabytes in 2025, with growth expected to continue well beyond that (Source: Statista).

Data is clearly growing and pervasive. As a result, demand for innovative processes to accurately gather, organize, and interpret it will also increase, giving data-driven enterprises the opportunity to advance their data engineering practices. In data engineering, data pipelines are built by applying data integration strategies to disparate data from its sources.

Two data integration strategies are commonly used for this: ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform).

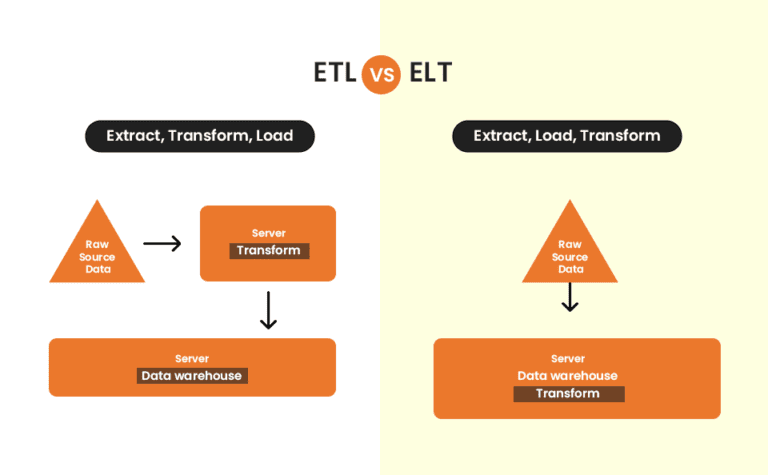

Differences in data integration procedures

The fundamental difference between the two pipelines is that ETL transforms data on a separate processing server before loading it, while ELT loads raw data first and transforms it within the data warehouse itself.
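To make the difference concrete, here is a minimal Python sketch that runs both patterns on the same toy data. It assumes an in-memory SQLite database as a stand-in for the data warehouse, and the table names and sample rows are hypothetical. In the ETL path the cleanup happens in Python before loading; in the ELT path the raw rows are loaded first and cleaned with SQL inside the database.

```python
import sqlite3

# Hypothetical raw source rows: (visitor_id, page, visit_date), with messy formatting.
SOURCE_ROWS = [
    (" 101 ", "/contact", "2022-06-01"),
    ("102", "/pricing", "2022-06-02"),
    ("103 ", "/CONTACT", "2022-06-03"),
]


def extract():
    """Extract: read rows from the (hypothetical) source system."""
    return list(SOURCE_ROWS)


def run_etl(conn):
    """ETL: clean the rows in Python (the separate processing step), then load them."""
    cleaned = [(int(v.strip()), p.lower(), d) for v, p, d in extract()]
    conn.execute("CREATE TABLE visits_etl (visitor_id INTEGER, page TEXT, visit_date TEXT)")
    conn.executemany("INSERT INTO visits_etl VALUES (?, ?, ?)", cleaned)


def run_elt(conn):
    """ELT: load the raw rows as-is, then transform them with SQL inside the database."""
    conn.execute("CREATE TABLE visits_raw (visitor_id TEXT, page TEXT, visit_date TEXT)")
    conn.executemany("INSERT INTO visits_raw VALUES (?, ?, ?)", extract())
    conn.execute(
        """
        CREATE TABLE visits_elt AS
        SELECT CAST(TRIM(visitor_id) AS INTEGER) AS visitor_id,
               LOWER(page) AS page,
               visit_date
        FROM visits_raw
        """
    )


conn = sqlite3.connect(":memory:")  # in-memory SQLite stands in for a data warehouse
run_etl(conn)
run_elt(conn)
etl_rows = conn.execute("SELECT * FROM visits_etl ORDER BY visitor_id").fetchall()
elt_rows = conn.execute("SELECT * FROM visits_elt ORDER BY visitor_id").fetchall()
print(etl_rows == elt_rows)  # True
```

Both paths end with the same cleaned table; what changes is where the transformation runs and whether the raw rows (here, `visits_raw`) are kept.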

In data integration, the transform stage is critical to both ELT and ETL. Suppose a data consumer wants to know: How many people have visited my website's contact page in the past three months?

In an ETL process, data engineers typically extract the website visitor data (the source data) and transform it according to a set of specifications, such as what the data will be used for, where it will be stored, and how it can help other departments.

One of ETL's most obvious disadvantages is its high initial cost. With on-premises data storage, the cost can run into the hundreds of thousands of dollars. Even with cloud-based storage, the initial cost remains significant because data engineers must first design the transformation logic.

With ETL, transforming data before loading it into the storage system can be time-consuming. It does have a benefit, however: because the data is already structured when it is loaded, analysis is quick and simple.

With ELT, on the other hand, data engineers store large amounts of raw data, which makes ELT well suited to broad-based analytics. They can perform extensive analyses of historical data or make small adjustments to generate reports on specific topics. ETL does not allow this because it does not keep the raw data.
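The sketch below illustrates that flexibility with the contact-page question from earlier. It assumes in-memory SQLite as a stand-in warehouse, hypothetical visit events, and a fixed reporting date so the result is reproducible. Because the raw events are kept, both the original question and a new one can be answered with plain SQL.

```python
import sqlite3

AS_OF = "2022-09-30"  # hypothetical reporting date

conn = sqlite3.connect(":memory:")  # in-memory SQLite stands in for the warehouse
conn.execute("CREATE TABLE raw_visits (visitor_id INTEGER, page TEXT, visit_date TEXT)")
conn.executemany(
    "INSERT INTO raw_visits VALUES (?, ?, ?)",
    [
        (1, "/contact", "2022-08-15"),
        (1, "/contact", "2022-09-01"),  # repeat visitor
        (2, "/contact", "2022-07-10"),
        (3, "/contact", "2022-03-05"),  # older than three months
        (3, "/pricing", "2022-09-20"),
        (4, "/blog", "2022-09-25"),
    ],
)

# Original question: distinct visitors to the contact page in the past three months.
contact_visitors = conn.execute(
    """
    SELECT COUNT(DISTINCT visitor_id) FROM raw_visits
    WHERE page = '/contact' AND visit_date >= date(?, '-3 months')
    """,
    (AS_OF,),
).fetchone()[0]

# A new question, answered from the same raw table without changing any pipeline.
visits_per_page = conn.execute(
    """
    SELECT page, COUNT(*) FROM raw_visits
    WHERE visit_date >= date(?, '-3 months')
    GROUP BY page ORDER BY page
    """,
    (AS_OF,),
).fetchall()

print(contact_visitors)  # 2
print(visits_per_page)   # [('/blog', 1), ('/contact', 3), ('/pricing', 1)]
```

If an ETL pipeline had loaded only a pre-aggregated contact-page count, the second query would require changing the pipeline first.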

Beyond differences in the integration process itself, companies choose ELT or ETL based on several other factors. Let's examine these in more depth.

Key distinctions between ETL and ELT

Order of Process

With ETL, users must plan ahead and decide which data items to extract for transformation and loading. ELT, by contrast, allows data to be extracted and loaded immediately; users can decide later which data to transform and analyze.

During extraction, data can be retrieved from proprietary databases, legacy systems, cloud environments, business applications such as CRM and ERP systems, and virtually any other data storage platform.
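As a small illustration of extraction from heterogeneous sources, the following sketch reads a CSV export and a JSON payload with Python's standard library and tags each record with its source, leaving the content itself untouched. The sample data and the "crm"/"erp" labels are hypothetical; real extracts would usually come from database connections, files, or APIs.

```python
import csv
import io
import json

# Hypothetical exports from two source systems.
CRM_CSV = "customer_id,name\n1,Ada\n2,Grace\n"
ERP_JSON = '[{"id": 7, "customer": "Linus"}]'


def extract_csv(text, source):
    """Read every row of a CSV export as-is, tagged with its source system."""
    return [{"source": source, "payload": row} for row in csv.DictReader(io.StringIO(text))]


def extract_json(text, source):
    """Read every record of a JSON array as-is, tagged with its source system."""
    return [{"source": source, "payload": rec} for rec in json.loads(text)]


records = extract_csv(CRM_CSV, "crm") + extract_json(ERP_JSON, "erp")
for record in records:
    print(record)
```

Keeping the payloads unchanged leaves the choice of transformation to a later step, which is where ETL and ELT part ways.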

Flexibility

ETL is typically a defined procedure built to support a very specific use. For example, many healthcare enterprises are moving their data and applications from on-premises systems to the cloud to secure their data, save money, and make their applications more scalable, and ETL is frequently used to carry out these migrations.

ELT, on the other hand, makes data readily available for self-service analytics. For example, large-scale weather reporting enterprises need real-time access to current data to get immediate business intelligence.

Dealing with Big Data

ETL processes traditionally handle structured relational data, with on-premises computing resources running the workflow. As a result, scalability becomes an issue. A real-life ETL use case is Electronic Health Record (EHR) data migration, where data engineers extract structured relational patient data from legacy EHR systems and transform it into a format suited to the new system.
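A transformation step in such a migration might look like the sketch below. The legacy field names (`PT_ID`, `PT_NAME`, `DOB`, `SEX`), the caret-separated name format, the code mapping, and the target schema are all hypothetical; real EHR migrations follow the specific layouts and standards of the systems involved.

```python
from datetime import datetime

# Hypothetical records from a legacy EHR export.
LEGACY_ROWS = [
    {"PT_ID": "000123", "PT_NAME": "DOE^JANE", "DOB": "07/04/1985", "SEX": "F"},
    {"PT_ID": "000456", "PT_NAME": "ROE^RICHARD", "DOB": "12/31/1970", "SEX": "M"},
]

# Assumed code mapping required by the (hypothetical) target system.
SEX_CODES = {"F": "female", "M": "male"}


def transform(row):
    """Map one legacy record onto the new system's schema."""
    family, given = row["PT_NAME"].split("^", 1)  # legacy format: FAMILY^GIVEN
    return {
        "patient_id": row["PT_ID"],  # kept as text so leading zeros survive
        "given_name": given.title(),
        "family_name": family.title(),
        # Convert MM/DD/YYYY to ISO 8601 (YYYY-MM-DD).
        "birth_date": datetime.strptime(row["DOB"], "%m/%d/%Y").date().isoformat(),
        "sex": SEX_CODES.get(row["SEX"], "unknown"),
    }


migrated = [transform(row) for row in LEGACY_ROWS]
print(migrated[0])
```

Because the target format is known in advance and the data is structured, this kind of fixed mapping fits the ETL model well.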

ELT, by contrast, readily addresses the scalability problems brought on by big data by using on-demand cloud services. For example, eCommerce businesses generate high volumes of transactions that need to be compiled and analyzed. Retailers may need this data to target their loyal customers, or need real-time access to it so they can deliver personalized marketing campaigns.

Data Privacy or Time to Insight

Modifying an ETL data pipeline takes time and effort, so users must wait for the development team to implement requested changes. Still, a financial firm that prioritizes security over speed may choose ETL as its data integration technique.

Financial institutions must comply with regulations such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). They may therefore use ETL to mask, encrypt, or scrub sensitive data before uploading it to the cloud.
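The following sketch shows what such a pre-load transformation could look like, assuming hypothetical field names and a hard-coded key used for illustration only. It pseudonymizes an identifier with a keyed hash (HMAC-SHA256), masks an account number, and drops a field entirely. Encryption is not shown; it would normally rely on a vetted cryptography library and managed keys. This illustrates the pattern and is not a compliance recipe.

```python
import hashlib
import hmac

# Hypothetical secret for illustration; in practice it would come from a secrets manager.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"


def pseudonymize(value: str) -> str:
    """Keyed SHA-256 hash: the same input always yields the same pseudonym,
    but the pseudonym cannot be recomputed without the key."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_account(number: str) -> str:
    """Keep only the last four digits of an account number."""
    return "*" * (len(number) - 4) + number[-4:]


def scrub(record: dict) -> dict:
    """Transform step run before loading to the cloud: pseudonymize, mask, or drop fields."""
    return {
        "customer_key": pseudonymize(record["email"]),
        "account": mask_account(record["account_number"]),
        "balance": record["balance"],
        # 'ssn' is intentionally omitted, so it never leaves the on-premises system.
    }


raw = {"email": "jane@example.com", "account_number": "1234567890", "ssn": "000-00-0000", "balance": 2500.0}
clean = scrub(raw)
print(clean["account"])  # ******7890
```

Because the pseudonym is stable, records belonging to the same customer can still be joined in the cloud without exposing the original email address.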

ELT provides more agility: with some training and modern analytics applications, end users can easily connect to and experiment with unstructured data, visualize insights on a dashboard, and run predictive models.

For instance, a shipping company that uses marine telematics devices to track vessel positions may need to process huge volumes of diverse data generated by sensors, video recorders, GPS trackers, and more. The data grows with the number of vessels, so processing must scale reliably. Handling data at this scale requires significant resources and investment, and ELT is the best approach to cutting costs while improving performance.
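Here is a minimal sketch of the ELT pattern for this kind of data: device messages are loaded unchanged as JSON text, and only the GPS readings are transformed later with SQL. It assumes in-memory SQLite as a stand-in for a cloud warehouse (its `json_extract` function requires SQLite's JSON support, which recent builds include by default), and the message fields and vessel IDs are hypothetical.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse
conn.execute("CREATE TABLE raw_telemetry (received_at TEXT, payload TEXT)")

# Load: store heterogeneous device messages exactly as received (hypothetical fields).
messages = [
    ("2022-05-01T10:00Z", {"vessel": "V1", "type": "gps", "lat": 51.9, "lon": 4.1}),
    ("2022-05-01T10:00Z", {"vessel": "V1", "type": "engine", "rpm": 820}),
    ("2022-05-01T10:05Z", {"vessel": "V2", "type": "gps", "lat": 1.26, "lon": 103.8}),
]
conn.executemany(
    "INSERT INTO raw_telemetry VALUES (?, ?)",
    [(ts, json.dumps(msg)) for ts, msg in messages],
)

# Transform later, inside the warehouse, only for the question at hand: vessel positions.
conn.execute(
    """
    CREATE TABLE vessel_positions AS
    SELECT json_extract(payload, '$.vessel') AS vessel,
           received_at,
           json_extract(payload, '$.lat') AS lat,
           json_extract(payload, '$.lon') AS lon
    FROM raw_telemetry
    WHERE json_extract(payload, '$.type') = 'gps'
    """
)
positions = conn.execute("SELECT * FROM vessel_positions ORDER BY vessel").fetchall()
print(positions)
```

The engine readings stay in the raw table, available for a different analysis later without reloading anything.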

Conclusion

After examining the distinctions between the two methodologies, a common question arises: Is ELT replacing ETL? The answer depends on the application. If your organization relies on a legacy system, it is preferable to stay with ETL. If you are working on big data and cloud projects or hybrid architectures, ELT is preferable.

Another way to look at it, however, is that ELT may replace ETL in the future as data grows and modern cloud solutions progressively replace traditional ways of storing data. Given its many advantages over ETL, ELT appears to be the logical choice for creating efficient data flows: it is low-maintenance, versatile, and cost-effective, and it works for companies of various sizes and industries. ETL, by comparison, is an older, slower process with many hidden pitfalls on the path to data integration. Still, as the use cases show, ETL cannot be replaced entirely.
