Why this blog?

Many enterprises don’t have an analytics problem. They have a data pipeline problem. Dashboards exist, yet teams still argue over numbers. Reports take days. Analysts manually reconcile data across systems. Trust in reporting slowly erodes.

This blog explains where reporting failures actually originate and how a governed pipeline architecture resolves them. Using an Alteryx and Tableau implementation, it provides a practical blueprint for turning reporting environments into reliable decision intelligence.

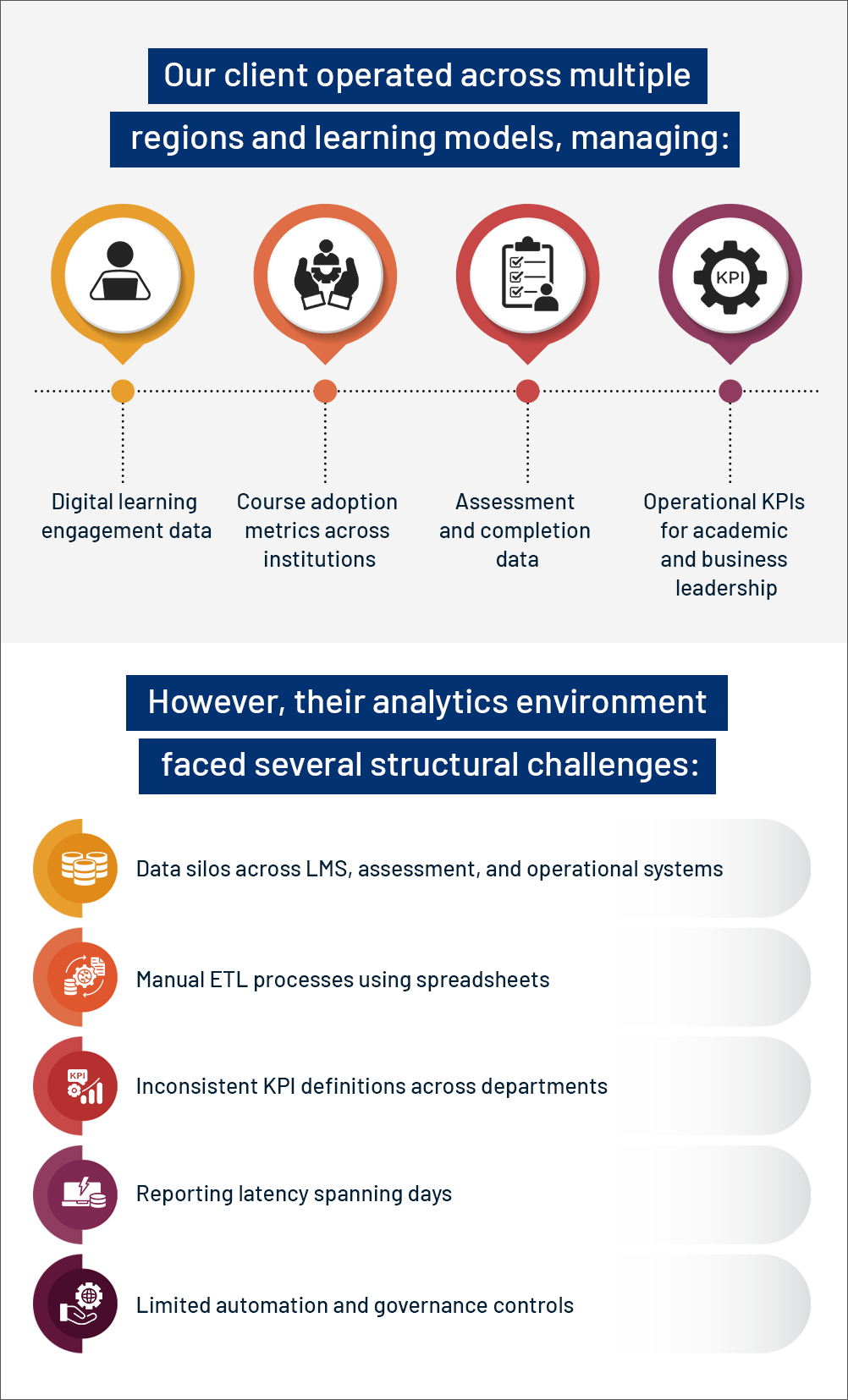

In today’s digital-first education landscape, institutions generate massive volumes of data, from student engagement and course adoption to assessments and operational performance. Yet, having data is not the same as having insight.

Many large education providers struggle with fragmented systems, manual reporting cycles, inconsistent metrics, and delayed decision-making. The result? Analysts spend more time reconciling numbers than enabling outcomes.

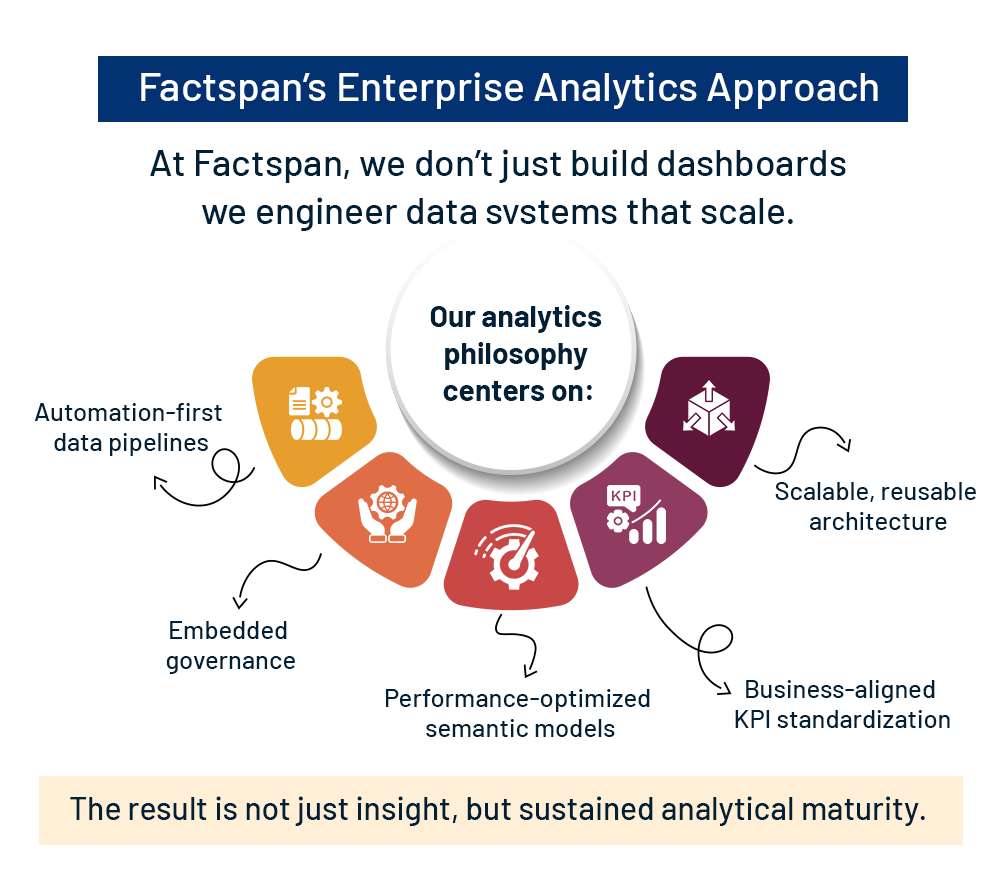

At Factspan, we partnered with a leading global education provider to design an enterprise-grade analytics ecosystem, one that unified data, automated pipelines, and delivered governed insights at scale.

What’s the Challenge?

Before evaluating fit, let’s establish clear definitions:

Traditional Automation executes predefined, deterministic workflows. If-then logic, rule-based routing, structured data processing. Predictable, auditable, reliable.

GenAI-Assisted Workflows augment human or automated processes with content generation, classification, summarization, or analysis. They respond to prompts but don’t orchestrate multi-step actions autonomously.

Agentic AI observes environments, formulates plans, executes multi-step actions across tools and APIs, and self-corrects based on feedback. These systems are goal-driven, not just reactive: they make decisions, handle exceptions, and adapt without constant human direction.

The critical distinction: agents introduce autonomy, which comes with both power and risk.

Where Does Agentic AI Fail in Enterprise Reality?

Fragmented Data, Slow Insights

The organization needed more than dashboards; it needed a scalable analytics backbone.

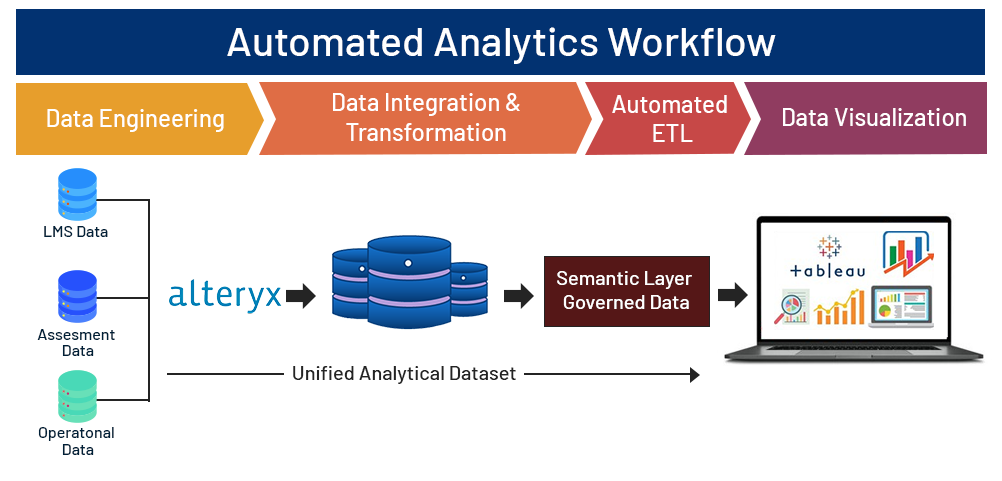

The Solution: Architecting an Automated Analytics Pipeline

We designed a modular, automated, and governed analytics framework leveraging:

- Alteryx for data engineering and ETL orchestration

- Server-based automation for refresh scheduling and governance

- Tableau for semantic modeling and visualization

Below is a visual overview of the architecture:

Step 1: Centralized Data Engineering with Alteryx

Using Alteryx workflows, we:

- Integrated LMS, assessment, and operational data

- Applied schema standardization and validation checks

- Built a unified analytical dataset

Tools leveraged included:

- Input & Dynamic Input tools

- Join and Union transformations

- Multi-Row Formula for time-based trends

- Summarize tools for cohort-level aggregations

- Batch macros for parameterization

This created a single source of truth (SSOT) for enterprise reporting.
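To make the workflow steps concrete, here is a minimal Python sketch of what the Join, Multi-Row Formula, and Summarize steps compute. It is an analogue for illustration, not the Alteryx workflow itself; the field names (`student_id`, `cohort`, `week`, `logins`, `score`) and the sample rows are assumptions, since the post does not describe the actual schema.

```python
from collections import defaultdict

# Hypothetical sample rows; field names are assumptions for illustration.
lms_rows = [
    {"student_id": "s1", "cohort": "2024A", "week": 1, "logins": 4},
    {"student_id": "s1", "cohort": "2024A", "week": 2, "logins": 6},
    {"student_id": "s2", "cohort": "2024A", "week": 1, "logins": 2},
    {"student_id": "s2", "cohort": "2024A", "week": 2, "logins": 1},
]
assessment_rows = [
    {"student_id": "s1", "week": 1, "score": 70},
    {"student_id": "s1", "week": 2, "score": 80},
    {"student_id": "s2", "week": 1, "score": 55},
    {"student_id": "s2", "week": 2, "score": 65},
]


def join_on_keys(left, right, keys):
    """Inner join two lists of dicts on the given key fields (Join analogue)."""
    index = {tuple(r[k] for k in keys): r for r in right}
    joined = []
    for row in left:
        match = index.get(tuple(row[k] for k in keys))
        if match is not None:
            joined.append({**row, **match})
    return joined


def add_change_vs_previous(rows, group_key, order_key, value_key):
    """Add the change versus the previous row per group (Multi-Row Formula analogue)."""
    previous = {}
    out = []
    for row in sorted(rows, key=lambda r: (r[group_key], r[order_key])):
        prior = previous.get(row[group_key])
        delta = None if prior is None else row[value_key] - prior
        out.append({**row, f"{value_key}_change": delta})
        previous[row[group_key]] = row[value_key]
    return out


def summarize_mean(rows, group_keys, value_key):
    """Average a value per group (Summarize analogue)."""
    totals = defaultdict(lambda: [0.0, 0])
    for row in rows:
        bucket = totals[tuple(row[k] for k in group_keys)]
        bucket[0] += row[value_key]
        bucket[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


unified = join_on_keys(lms_rows, assessment_rows, ["student_id", "week"])
trended = add_change_vs_previous(unified, "student_id", "week", "logins")
cohort_scores = summarize_mean(trended, ["cohort", "week"], "score")
```

The point of the sketch is the ordering: sources are joined into one dataset first, trend columns are derived per student, and only then are cohort-level aggregates computed, so every downstream report reads from the same unified rows.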

Step 2: Enterprise-Grade Data Governance

- Business logic was embedded directly into ETL workflows

- Engagement, completion, and adoption metrics were standardized

- Validation macros enforced KPI definitions

- Data profiling ensured schema and null consistency

By governing metrics at the pipeline level, we sharply reduced downstream reconciliation effort.
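As a rough illustration of governing metrics inside the pipeline, the Python sketch below profiles rows for schema and null problems, then applies a single shared KPI function to the rows that pass. The schema, the field names, and the completion-rate formula are all assumptions made for the example; the post does not state the actual KPI definitions.

```python
# Hypothetical schema and KPI rule; the real definitions are not given in this post.
EXPECTED_SCHEMA = {
    "student_id": str,
    "modules_assigned": int,
    "modules_completed": int,
}


def profile_rows(rows, schema):
    """Return readable issues for missing fields, nulls, and wrong types."""
    issues = []
    for i, row in enumerate(rows):
        for field, expected_type in schema.items():
            if field not in row:
                issues.append(f"row {i}: missing field '{field}'")
            elif row[field] is None:
                issues.append(f"row {i}: null in '{field}'")
            elif not isinstance(row[field], expected_type):
                issues.append(f"row {i}: '{field}' is not {expected_type.__name__}")
    return issues


def completion_rate(row):
    """One shared definition of the completion KPI (an assumed formula)."""
    if row["modules_assigned"] == 0:
        return 0.0
    return row["modules_completed"] / row["modules_assigned"]


rows = [
    {"student_id": "s1", "modules_assigned": 10, "modules_completed": 8},
    {"student_id": "s2", "modules_assigned": 10, "modules_completed": None},
    {"student_id": "s3", "modules_assigned": "10", "modules_completed": 5},
]

issues = profile_rows(rows, EXPECTED_SCHEMA)
# Keep only rows that raised no profiling issue before computing the KPI.
clean = [
    r for i, r in enumerate(rows)
    if not any(msg.startswith(f"row {i}:") for msg in issues)
]
rates = {r["student_id"]: completion_rate(r) for r in clean}
```

Because the KPI lives in one function that every report calls, two departments cannot silently compute "completion" in different ways, which is the reconciliation problem the governance step is meant to remove.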

Step 3: Automation & Orchestration

Workflows were deployed via server scheduling:

- Automated refresh cycles

- Incremental data updates

- Failure monitoring and alerting

- Version-controlled workflow management

Reporting latency dropped from days to near real-time refresh.
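The incremental update and alerting ideas can be sketched in a few lines of Python. This is an assumed design using a high-water mark on an `updated_at` timestamp, not the scheduler's actual mechanism; the function names, the field name, and the alert callback are hypothetical.

```python
from datetime import datetime


def incremental_refresh(source_rows, target_rows, watermark):
    """Append only rows updated after the watermark; return the new watermark."""
    new_rows = [r for r in source_rows if r["updated_at"] > watermark]
    target_rows.extend(new_rows)
    if new_rows:
        watermark = max(r["updated_at"] for r in new_rows)
    return watermark, len(new_rows)


def run_with_alert(job, alert):
    """Run a refresh job; on any exception, send an alert and report failure."""
    try:
        job()
        return True
    except Exception as exc:
        alert(f"refresh failed: {exc}")
        return False


source = [
    {"id": 1, "updated_at": datetime(2024, 1, 1)},
    {"id": 2, "updated_at": datetime(2024, 1, 2)},
    {"id": 3, "updated_at": datetime(2024, 1, 3)},
]
target = [source[0]]                 # row 1 was loaded by an earlier run
watermark = datetime(2024, 1, 1)     # timestamp of the last loaded row
watermark, loaded = incremental_refresh(source, target, watermark)

alerts = []                          # stand-in for an email or chat notification


def broken_job():
    raise RuntimeError("source unavailable")


ok = run_with_alert(broken_job, alerts.append)
```

This sketch only appends new rows. A pipeline that must also pick up edits to already-loaded records would need an upsert keyed on `id` instead of a plain append.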

Step 4: Semantic Modeling & Visualization in Tableau

With clean, governed data in place, Tableau enabled intuitive consumption.

Semantic Modeling Capabilities

- Extract-based (.hyper) architecture

- Level of Detail (LOD) expressions for cohort analysis

- Context filters for performance optimization

- Row-Level Security (RLS) for institutional access

- Parameter-driven drilldowns
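For readers less familiar with these Tableau features, the Python sketch below mimics two of them. A FIXED LOD expression such as `{ FIXED [Cohort] : AVG([Completed]) }` computes an aggregate at a chosen grain and attaches it to every row, and row-level security restricts each user to the rows they are entitled to see. The field names, the entitlement table, and the user names are assumptions for illustration; this is not Tableau code and not the project's actual configuration.

```python
from collections import defaultdict

# Hypothetical rows and entitlements, for illustration only.
rows = [
    {"institution": "North", "cohort": "2024A", "student_id": "s1", "completed": 1},
    {"institution": "North", "cohort": "2024A", "student_id": "s2", "completed": 0},
    {"institution": "South", "cohort": "2024A", "student_id": "s3", "completed": 1},
    {"institution": "South", "cohort": "2024B", "student_id": "s4", "completed": 1},
]
entitlements = {"alice": {"North"}, "bob": {"North", "South"}}


def with_fixed_average(rows, dimension, measure, output_field):
    """Attach the per-dimension average to every row (FIXED LOD analogue)."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for r in rows:
        sums[r[dimension]] += r[measure]
        counts[r[dimension]] += 1
    return [
        {**r, output_field: sums[r[dimension]] / counts[r[dimension]]}
        for r in rows
    ]


def visible_rows(rows, user):
    """Return only rows for institutions the user may see (RLS analogue)."""
    allowed = entitlements.get(user, set())
    return [r for r in rows if r["institution"] in allowed]


with_rates = with_fixed_average(rows, "cohort", "completed", "cohort_completion_rate")
alice_view = visible_rows(with_rates, "alice")
```

In this sketch the cohort average is computed over all rows before the user filter is applied, so `alice` sees a cohort-wide rate next to only her institution's students. Whether that ordering is desirable is a design decision that depends on what each institution is permitted to know about the others.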

Executive & Operational Dashboards

Dashboards empowered stakeholders to:

- Monitor engagement trends over time

- Benchmark institutional performance

- Analyze completion and retention rates

- Identify early intervention opportunities

Complex educational data became actionable intelligence.

What Was the Real-World Business Impact?

The transformation resulted in:

✔ Reduced reporting turnaround time

✔ Unified KPI definitions across departments

✔ Elimination of manual reconciliation

✔ Improved trust in analytics outputs

✔ Faster, data-driven decision cycles

More importantly, the organization shifted from reactive reporting to proactive performance optimization.

Why Enterprise Analytics Matters in Education

Education ecosystems are inherently complex. Institutions manage multi-dimensional learner cohorts across departments, programs, and campuses while operating within rigid, time-based academic cycles. They also work through layered administrative hierarchies and need frequent data refreshes to keep reporting, compliance, and operational decisions accurate.

Without automation and governance, analytics becomes fragmented and unreliable. By combining structured ETL automation with governed BI architecture, we engineered a scalable analytics foundation capable of evolving alongside institutional growth.

Enterprise-grade analytics requires more than visualization tools. It demands a structured, automated, and governed ecosystem that transforms raw data into decision intelligence.

By integrating Alteryx-driven ETL workflows with Tableau-powered visualization, we delivered a resilient analytics backbone that empowers leaders to act confidently and strategically.

Struggling with slow reports or inconsistent metrics?

We help design governed data pipelines that turn analytics into reliable, scalable decision intelligence.