Medical imaging, such as computed tomography (CT), magnetic resonance imaging (MRI), positron emission tomography (PET), mammography, ultrasound, and X-rays, has grown in importance in recent decades. It is critical for the early detection, diagnosis, and treatment of disease. In clinics, human experts such as radiologists and physicians are primarily responsible for interpreting medical images.

However, because of wide variations in pathology and the potential for fatigue among human experts, researchers and clinicians have begun to benefit from computer-assisted interventions. Although advances in the computational analysis of medical images have lagged behind those in medical imaging technology itself, the field has started to gain traction with the help of machine learning techniques.

Diseases with a high occurrence rate, such as brain tumors, Alzheimer’s disease, neurological degeneration, and cerebral aneurysm, as well as common cancers such as prostate and breast cancer, have piqued the interest of ML researchers all over the world. Over the next decade, machine learning will most likely transform how we observe patterns in medical data and how those patterns can be used to improve brain health. This article looks at some real-world applications of this emerging revolution.

Machine Learning and Deep Learning in Medical Image Analysis

Machine learning (ML) and deep learning (DL) models are gaining popularity in biomedical research and clinical practice, showing potential in various applications such as risk modeling and stratification, personalized screening, diagnosis, prediction of response to therapy, and prognosis.

By integrating data from various sources, including medical imaging, disease risk factors, multiomics data, therapy procedures, and follow-up data, these advances have the potential to have a significant clinical impact, leading to high-performance healthcare services and the convergence of human intelligence and machine learning.

Traditional approaches

Traditional research in machine learning for image classification primarily uses pre-trained deep learning (DL) models such as ResNet (Residual Network) and VGGNet (Visual Geometry Group).

ResNet is a type of convolutional neural network used for image classification. It can be viewed as a gateless, or open-gated, variant of the earlier Highway Network. Its residual (skip) connections let information and gradients propagate through far deeper networks than earlier architectures could train. The VGG architecture consists of blocks, each composed of 2D convolution layers followed by a max pooling layer; stacking two or three convolution layers per block allows the model to be trained at considerable depth.
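The residual connection described above can be illustrated with a minimal sketch in plain Python. This is not the actual ResNet implementation: the helper names (`linear`, `residual_block`) and the single linear-plus-ReLU transform standing in for the convolutional layers are assumptions made for illustration. The point is only that the block outputs `x + F(x)`, so when the learned transform contributes nothing, the input passes through unchanged.

```python
def relu(values):
    # Element-wise rectifier: negative entries become 0.0.
    return [max(0.0, v) for v in values]


def linear(weights, inputs):
    # Matrix-vector product; `weights` is a list of rows.
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def residual_block(inputs, weights):
    # F(x): a stand-in for the block's convolutional layers (assumption).
    transformed = relu(linear(weights, inputs))
    # Skip connection: add the unchanged input back to F(x).
    return [x + f for x, f in zip(inputs, transformed)]


x = [1.0, -2.0, 3.0]
zero_weights = [[0.0] * 3 for _ in range(3)]

# With all-zero weights F(x) is zero, so the block acts as the identity.
print(residual_block(x, zero_weights))  # [1.0, -2.0, 3.0]
```

Because the identity path is always available, stacking many such blocks does not force every layer to relearn its input, which is one reason very deep networks become trainable.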

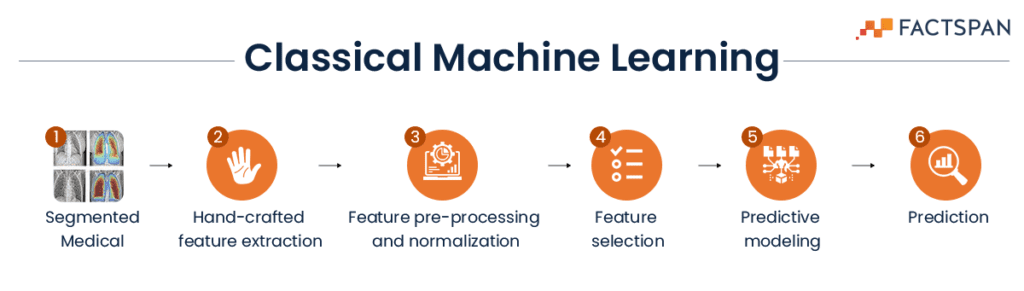

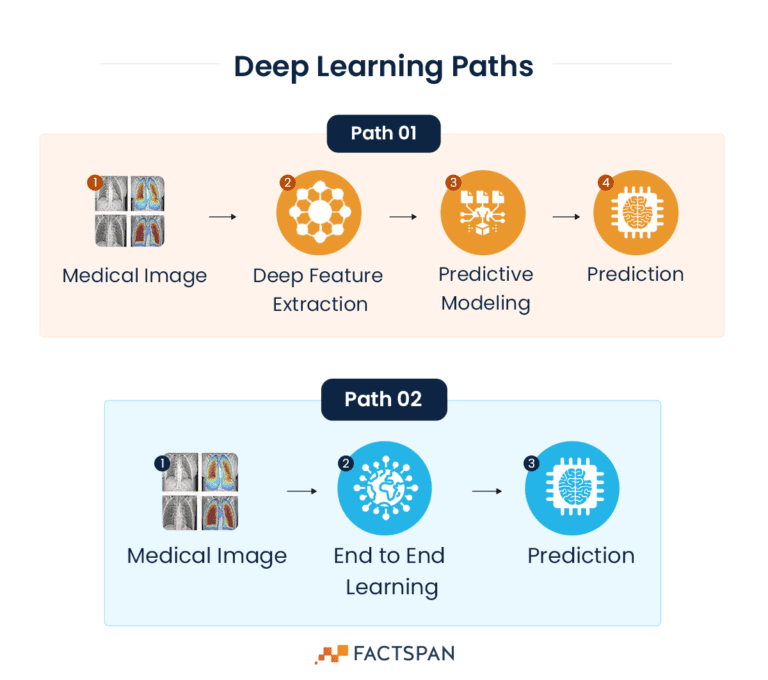

In contrast, DL can follow two different paths, both of which are shorter and more accurate than classical ML paths.

Alternate approach

We can also build a solution using the EfficientNet model.

EfficientNet is a family of image classification models developed by Google Research. As the name suggests, EfficientNet is all about improving accuracy and efficiency, both in terms of computational resources and memory usage, through AutoML and model scaling techniques.

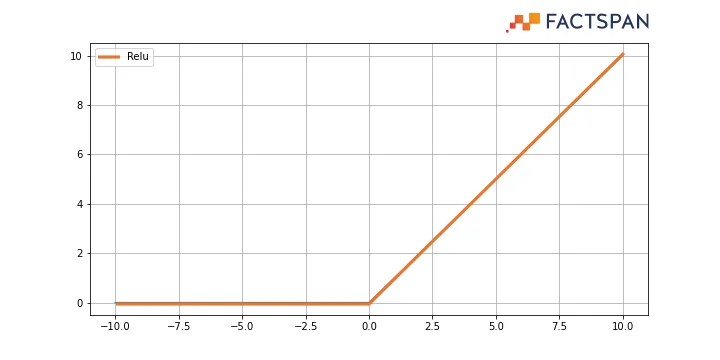

In this approach, EfficientNet is used as the base model, and a dense layer with “ReLU” activation is added on top of it.

ReLU, the rectified linear unit widely used in neural networks, sets negative values to zero and passes positive values through unchanged. It is essentially a ramp function that works like a half-wave rectifier in electrical engineering (which converts one half-cycle of the AC input to DC output).
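As a quick illustration of the ramp behaviour described above, here is a minimal sketch of ReLU in plain Python. The sample values are made up for the example.

```python
def relu(x):
    # Ramp function: 0 for negative inputs, the input itself otherwise.
    return max(0.0, x)


samples = [-2.0, -0.5, 0.0, 1.5, 3.0]
print([relu(v) for v in samples])  # [0.0, 0.0, 0.0, 1.5, 3.0]
```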

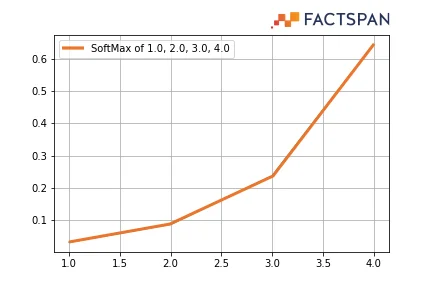

Finally, the output layer can use “Softmax” activation, with “categorical cross-entropy” as the loss function.

Softmax provides a probability distribution over the classes, which makes the network’s output easier to interpret. It is a normalized exponential function that converts a vector of n real numbers into a probability distribution over n possible outcomes. Categorical cross-entropy is used when the true labels are one-hot encoded.
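To make the two definitions above concrete, the following sketch computes softmax and categorical cross-entropy in plain Python. The logits and the one-hot label are invented example values, and the small `eps` term is an assumption added only to avoid taking the logarithm of zero.

```python
import math


def softmax(logits):
    # Subtract the maximum first for numerical stability; the result is unchanged.
    shift = max(logits)
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(one_hot, probs, eps=1e-12):
    # Only the term for the true class (where the one-hot entry is 1) contributes.
    return -sum(t * math.log(p + eps) for t, p in zip(one_hot, probs))


logits = [2.0, 1.0, 0.1]          # raw scores for three hypothetical classes
probs = softmax(logits)           # non-negative values that sum to 1
loss = categorical_cross_entropy([1, 0, 0], probs)

print(probs)
print(loss)
```

The loss shrinks toward zero as the probability assigned to the true class approaches 1, which is what training tries to achieve.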

The dataset used for training the model can be balanced with augmented images to reduce bias caused by under-represented classes. An adaptive learning rate can also be used: the training loop monitors three consecutive epochs and, depending on whether the percentage change in accuracy is positive or negative, the model automatically adjusts its learning rate.
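One possible reading of this adaptive learning rate rule is sketched below. The text does not specify the direction or size of the adjustment, so the function name `adjust_learning_rate`, the halving factor, and the minimum rate are all assumptions: here the rate is reduced only when accuracy has failed to improve across three consecutive epoch-to-epoch changes.

```python
def adjust_learning_rate(lr, accuracy_history, window=3, decay=0.5, min_lr=1e-6):
    """Return a (possibly reduced) learning rate based on recent accuracy.

    `window`, `decay`, and `min_lr` are illustrative defaults, not values
    taken from the article.
    """
    # Need window + 1 accuracy values to measure `window` consecutive changes.
    if len(accuracy_history) < window + 1:
        return lr

    recent = accuracy_history[-(window + 1):]
    # Percentage change in accuracy from each epoch to the next.
    changes = [
        (curr - prev) / prev * 100.0 if prev else 0.0
        for prev, curr in zip(recent, recent[1:])
    ]

    # No improvement in any of the last `window` epochs: reduce the rate.
    if all(change <= 0.0 for change in changes):
        return max(lr * decay, min_lr)
    return lr


print(adjust_learning_rate(0.001, [0.70, 0.69, 0.68, 0.67]))  # accuracy falling
print(adjust_learning_rate(0.001, [0.70, 0.72, 0.71, 0.74]))  # accuracy improving
```

In a real training loop this check would run at the end of every epoch, with the returned value passed to the optimizer.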

As an additional step, after five epochs the model can switch to fine-tuning, which can further improve accuracy in detecting anomalies in medical images. It can then be tested on an MRI dataset and a skin disease dataset. Evaluation metrics that can be used to assess its performance include precision, recall, F1-score, and support.
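The four evaluation metrics named above can be computed per class as in the following sketch. The function name `per_class_report` and the tiny label lists are hypothetical and exist only to show the arithmetic; they are not results from any real model.

```python
def per_class_report(y_true, y_pred):
    """Compute precision, recall, F1-score, and support for each class."""
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)

        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)

        report[label] = {
            "precision": precision,
            "recall": recall,
            "f1-score": f1,
            "support": tp + fn,  # number of true samples of this class
        }
    return report


# Hypothetical labels for five scans.
y_true = ["tumor", "tumor", "tumor", "normal", "normal"]
y_pred = ["tumor", "tumor", "normal", "normal", "tumor"]

for label, metrics in per_class_report(y_true, y_pred).items():
    print(label, metrics)
```

Support is simply the number of true samples per class, which helps show whether a high score rests on only a handful of examples.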

Managing Patient Data with NLP Chatbot and UI

By implementing an NLP Chatbot and interactive UI, hospitals and doctors can manage patient data, leading to two benefits. First, patients can track their progress reports without visiting a clinic or hospital, reducing crowds and increasing the availability of appointments.

Second, a managed database can aid doctors in designing medication for individual patients.

Success to date and future scope

Researchers have shifted from statistical and population-based forecasts to individual predictions, allowing for more effective preventive and therapeutic measures. Examples of therapeutic interventions include the use of medical imaging technology to detect and treat cancer.

In 2022, an Omaha-based medical imaging company, Bot Image.ai, received FDA clearance for its software “ProstatID”, which is designed to detect prostate cancer accurately using pre-processed MRI.

Another solution that received FDA clearance in 2022 is Neosoma HGG, which reports 95.5% accuracy in detecting brain tumors. While the development and acceptance of this technology are still in their early stages, it shows promise for future medical advancements.

Want to deploy deep learning solutions to enhance your anomaly detection? We are eager to help. Contact us to brainstorm your solution.